AI Proxy

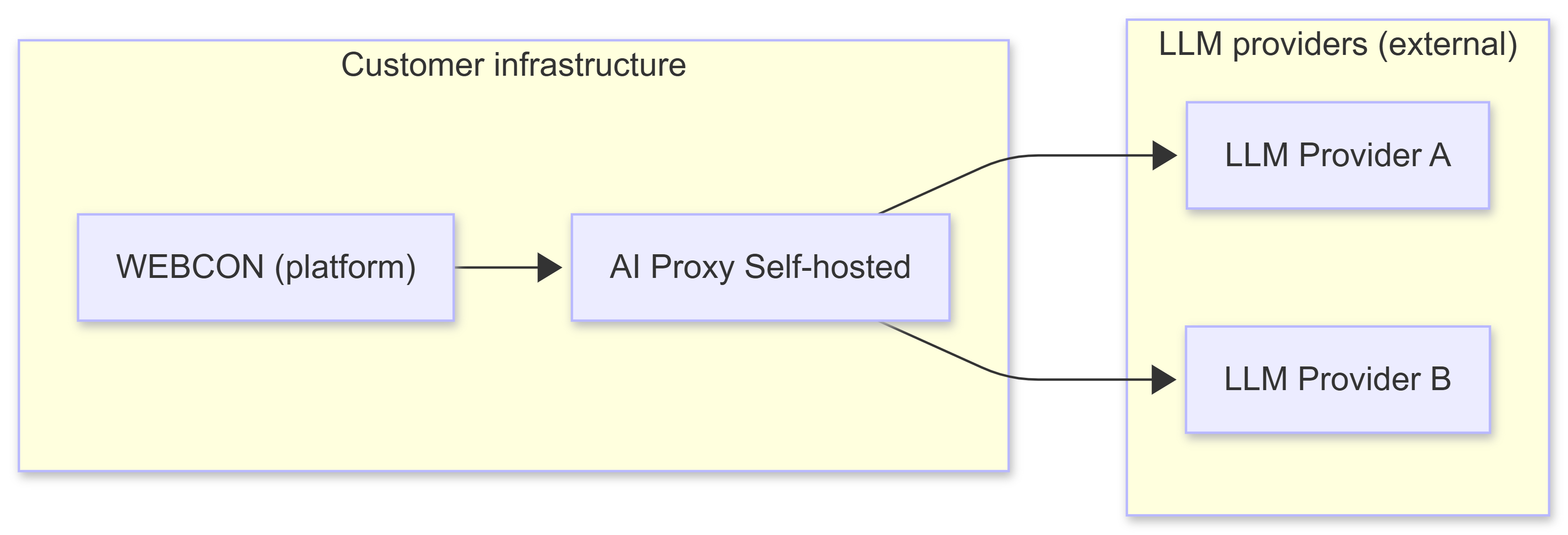

AI Proxy Self-hosted enables the use of AI functionality in WEBCON on the customer’s own infrastructure (on-premises or private cloud), without requiring WEBCON infrastructure to process requests. The component acts as an intermediary layer between WEBCON and external AI model providers: it receives requests from WEBCON, enforces security and configuration rules (e.g., provider/model selection, authentication, certificate handling), then forwards the requests to the selected AI services and returns the response to WEBCON. The solution is intended for organizations that need greater control over data and the runtime environment and want to meet security, privacy policy, and industry regulatory requirements more easily, while keeping flexibility in the choice of providers and models.
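The request flow described above (receive, enforce rules, forward, return) can be illustrated with a minimal sketch. It is not the product's implementation: the names `ProxyRequest`, `ALLOWED_MODELS`, `VALID_API_KEYS`, `handle`, and `fake_forward`, as well as the provider and model identifiers, are hypothetical and chosen only for illustration.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class ProxyRequest:
    api_key: str
    provider: str
    model: str
    prompt: str


# Hypothetical rule set; the real configuration format is not shown in this document.
ALLOWED_MODELS = {"provider-a": {"model-1"}, "provider-b": {"model-2"}}
VALID_API_KEYS = {"demo-key"}


def handle(request: ProxyRequest, forward: Callable[[str, str, str], str]) -> str:
    """Check the caller and the requested provider/model, then forward the prompt."""
    if request.api_key not in VALID_API_KEYS:
        raise PermissionError("unknown caller")
    if request.model not in ALLOWED_MODELS.get(request.provider, set()):
        raise ValueError(f"{request.provider}/{request.model} is not enabled")
    # Only requests that passed both checks reach the external provider.
    return forward(request.provider, request.model, request.prompt)


def fake_forward(provider: str, model: str, prompt: str) -> str:
    """Stand-in for a call to an external AI service."""
    return f"[{provider}/{model}] {prompt}"


if __name__ == "__main__":
    request = ProxyRequest("demo-key", "provider-a", "model-1", "hello")
    print(handle(request, fake_forward))
```

Passing the forwarding function as a parameter keeps the rule checks independent of any particular provider, which mirrors the intermediary role described above.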

What are AI Proxy and AI Proxy Self-hosted?

AI Proxy is a modular, cloud-native .NET 8 application designed to provide secure, scalable, and extensible access to artificial intelligence capabilities. The solution acts as an API Gateway, integrating with multiple external AI services, including:

- Google Gemini - Google AI models,

- Azure AI Foundry - Azure AI services,

- OpenAI - GPT language models.

High-level architecture

The components that make up AI Proxy are summarized below at a high level.

Core components

- AI Proxy - the main application that exposes endpoints, integrates with external AI services, and manages user requests,

- Application Gateway - provides secure user access by routing traffic to AI Proxy in production environments,

- Integration VNET - enables secure communication between AI Proxy and Azure resources (Azure SQL, Key Vault, Storage Account).

Azure resources

- Azure SQL - stores the application’s persistent data,

- Dedicated Key Vault - securely manages secrets and credentials,

- Storage Account - supports file and data storage needs,

- Application Insights - collects telemetry and monitoring data for diagnostics and performance tracking.

External integrations

- AI services - integration with Gemini, OpenAI, and other AI providers.

Modular architecture

AI Proxy is divided into modules, which makes it easier to add new integrations and maintain the code:

- AiConnector modules - an abstraction layer for AI providers (OpenAI, Gemini, Vertex AI),

- AiTools modules - Agent/Concierge processors, model selection tools, validators,

- Infrastructure - shared components (authentication, database, Key Vault, logging, telemetry).

Design patterns

The project uses, among others:

- Minimal APIs

- Endpoint Filters

- Factory Pattern - dynamic selection of a provider/tool,

- Configuration Providers: aiconfiguration.json for AI models, appsettings.json for infrastructure.
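As an illustration of the Factory Pattern entry above, the sketch below shows one common way to select a provider connector dynamically by name. AI Proxy itself is a .NET application, so this Python version only conveys the idea; `AiConnector`, `OpenAiConnector`, `GeminiConnector`, and `ConnectorFactory` are hypothetical names, not the product's actual types.

```python
from typing import Callable, Dict, Protocol


class AiConnector(Protocol):
    def complete(self, prompt: str) -> str: ...


class OpenAiConnector:
    def complete(self, prompt: str) -> str:
        # A real connector would call the provider's API here.
        return f"openai:{prompt}"


class GeminiConnector:
    def complete(self, prompt: str) -> str:
        return f"gemini:{prompt}"


class ConnectorFactory:
    """Maps a provider name to a function that builds its connector."""

    def __init__(self) -> None:
        self._builders: Dict[str, Callable[[], AiConnector]] = {}

    def register(self, name: str, builder: Callable[[], AiConnector]) -> None:
        self._builders[name.lower()] = builder

    def create(self, name: str) -> AiConnector:
        try:
            builder = self._builders[name.lower()]
        except KeyError:
            raise ValueError(f"no connector registered for provider '{name}'") from None
        return builder()


factory = ConnectorFactory()
factory.register("openai", OpenAiConnector)
factory.register("gemini", GeminiConnector)

if __name__ == "__main__":
    print(factory.create("Gemini").complete("hello"))
```

With this structure, supporting another provider means registering one more builder; the code that calls `create` does not change.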

AI Proxy Self-hosted

AI Proxy Self-hosted is a deployment variant that can be run locally or in a customer-managed environment without the full Azure infrastructure. This mode is particularly useful for:

- development environments - local testing and development without cloud costs,

- on-premises deployments - running within the customer’s own infrastructure,

- testing and demos - rapid prototyping without full Azure configuration.

Differences in Self-hosted mode

Configuration:

- set AppConfiguration__SelfHosted__Enabled=true via environment variables,

- SSL/TLS certificates managed locally (.pem or .pfx files),

- can operate without Azure Key Vault (secrets stored in configuration).
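The double underscores in the environment variable name follow the .NET configuration convention, in which `__` stands for the `:` separator of a hierarchical key (here presumably `AppConfiguration:SelfHosted:Enabled`). The sketch below mimics that lookup in Python to show how the flag would be resolved; `read_setting` and `self_hosted_enabled` are hypothetical helpers, not part of AI Proxy.

```python
import os
from typing import Mapping, Optional


def read_setting(path: str, environ: Optional[Mapping[str, str]] = None) -> Optional[str]:
    """Look up a hierarchical key such as 'A:B:C' as the environment variable 'A__B__C'."""
    env = os.environ if environ is None else environ
    return env.get(path.replace(":", "__"))


def self_hosted_enabled(environ: Optional[Mapping[str, str]] = None) -> bool:
    """Return True only when the self-hosted flag is present and set to 'true'."""
    value = read_setting("AppConfiguration:SelfHosted:Enabled", environ)
    return value is not None and value.strip().lower() == "true"


if __name__ == "__main__":
    example_env = {"AppConfiguration__SelfHosted__Enabled": "true"}
    print(self_hosted_enabled(example_env))
```

In a container deployment, the variable would typically be supplied through the container runtime's environment settings rather than set in code.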

Infrastructure:

- no Application Gateway requirement,

- runs in a Docker container.

Example use cases:

- local development work,

- test environments,

- customer proof-of-concept (PoC) projects,

- isolated on-premises environments.

Security practices

Below are the practices and elements that usually matter most during deployments:

- secrets and sensitive data are stored in Azure Key Vault (in Self-hosted mode they can be kept in configuration instead),

- communication between components is secured via VNET integration and service endpoints,

- Application Gateway provides an additional layer of security and traffic management in production,

- endpoint-level authorization and licensing mechanisms.

Extensibility

The modular structure supports further development of the solution:

- the modular design makes it easy to add new AI services or endpoints,

- connector and processor factories enable integration with future AI providers,

- flexible configuration of models and priorities.
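To make the idea of configurable models and priorities more concrete, here is a minimal sketch of priority-based model selection. The structure is an assumption: the real schema of the model configuration is not described in this document, and `ModelEntry`, `pick_model`, the provider and model names, and the rule that a lower number means higher priority are all hypothetical.

```python
from dataclasses import dataclass
from typing import AbstractSet, Iterable, Optional


@dataclass(frozen=True)
class ModelEntry:
    provider: str
    model: str
    priority: int  # assumption: a lower number means the model is preferred
    enabled: bool = True


def pick_model(
    entries: Iterable[ModelEntry],
    unavailable_providers: AbstractSet[str] = frozenset(),
) -> Optional[ModelEntry]:
    """Return the enabled entry with the best priority, skipping unavailable providers."""
    candidates = [
        entry
        for entry in entries
        if entry.enabled and entry.provider not in unavailable_providers
    ]
    return min(candidates, key=lambda entry: entry.priority, default=None)


if __name__ == "__main__":
    configured = [
        ModelEntry("provider-a", "model-1", priority=1),
        ModelEntry("provider-b", "model-2", priority=2),
        ModelEntry("provider-c", "model-3", priority=0, enabled=False),
    ]
    print(pick_model(configured))
    print(pick_model(configured, unavailable_providers={"provider-a"}))
```

Keeping the priorities in configuration rather than in code means the preferred model can be changed, or a fallback added, without modifying the selection logic.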

Monitoring and diagnostics

- Application Insights provides real-time telemetry, error tracking, and performance metrics,

- logs and metrics support proactive diagnostics and continuous improvement,

- OpenTelemetry support for advanced observability.